Data Technical Debt: 2022 Data Quality Survey Results

| As a manager, the quality of the data available to you has a direct impact on your ability to make effective decisions. I ran an informal survey exploring the state of data quality within organizations from April 11 to May 22, 2022. The survey received 66 responses in total. This blog posting shares the key findings of that survey.

Figure 1 explores the perceived importance of data within organizations. This year 95% of respondents indicated that data was considered to be an important asset within their organizations, which is consistent with previous studies that I have run. However, only 54% indicated that they were measuring data quality, which tells me that in many organizations “data is an asset” is merely rhetoric.

Figure 1. How important is data to your organization?

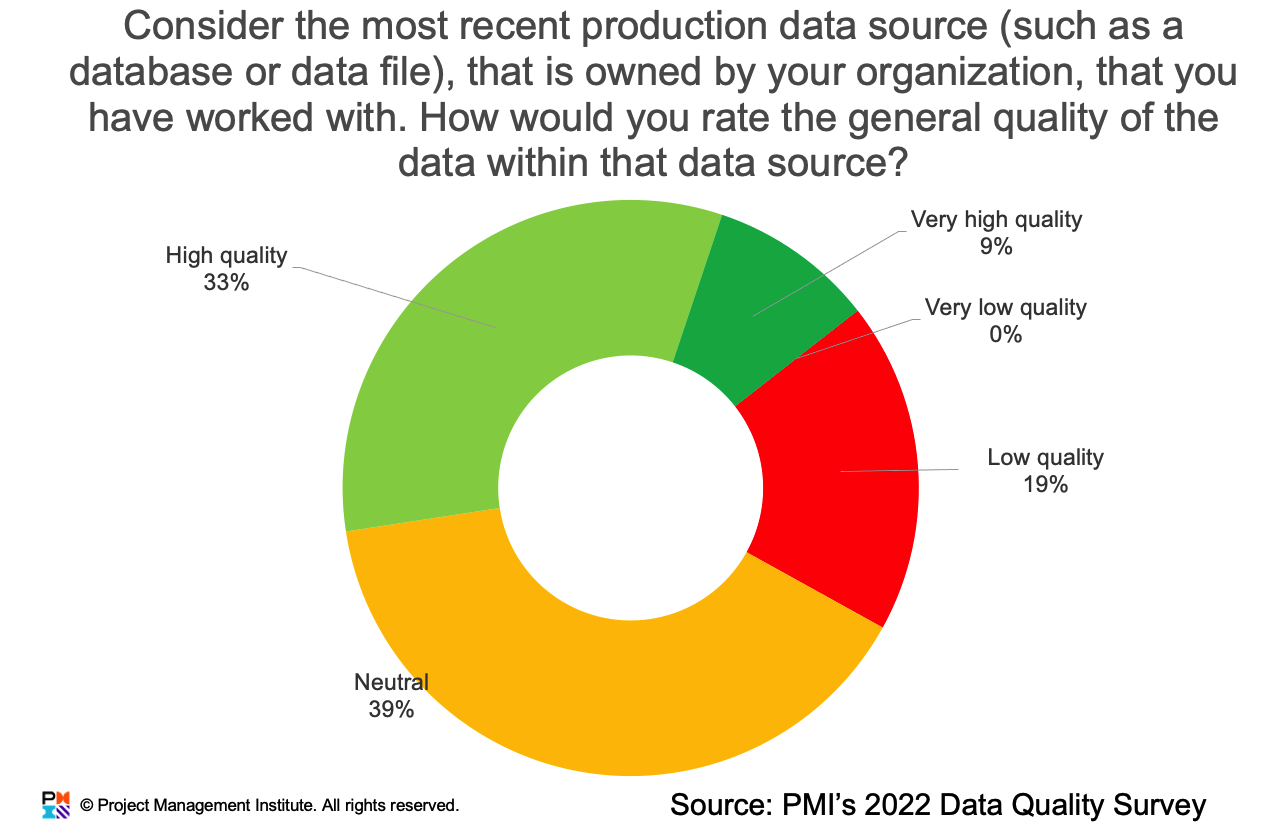

Data Technical Debt

The survey explored issues surrounding data technical debt (DTD), which is a measure of the level of data quality (DQ) problems within a data source. Figure 2 summarizes the results of a question that explored the quality of the data source most recently accessed by the respondent. Only 42% of respondents indicated that the data quality was high or very high, and 19% indicated that the data quality was low. Clearly there is room for improvement.

Figure 2. Quality of production data.

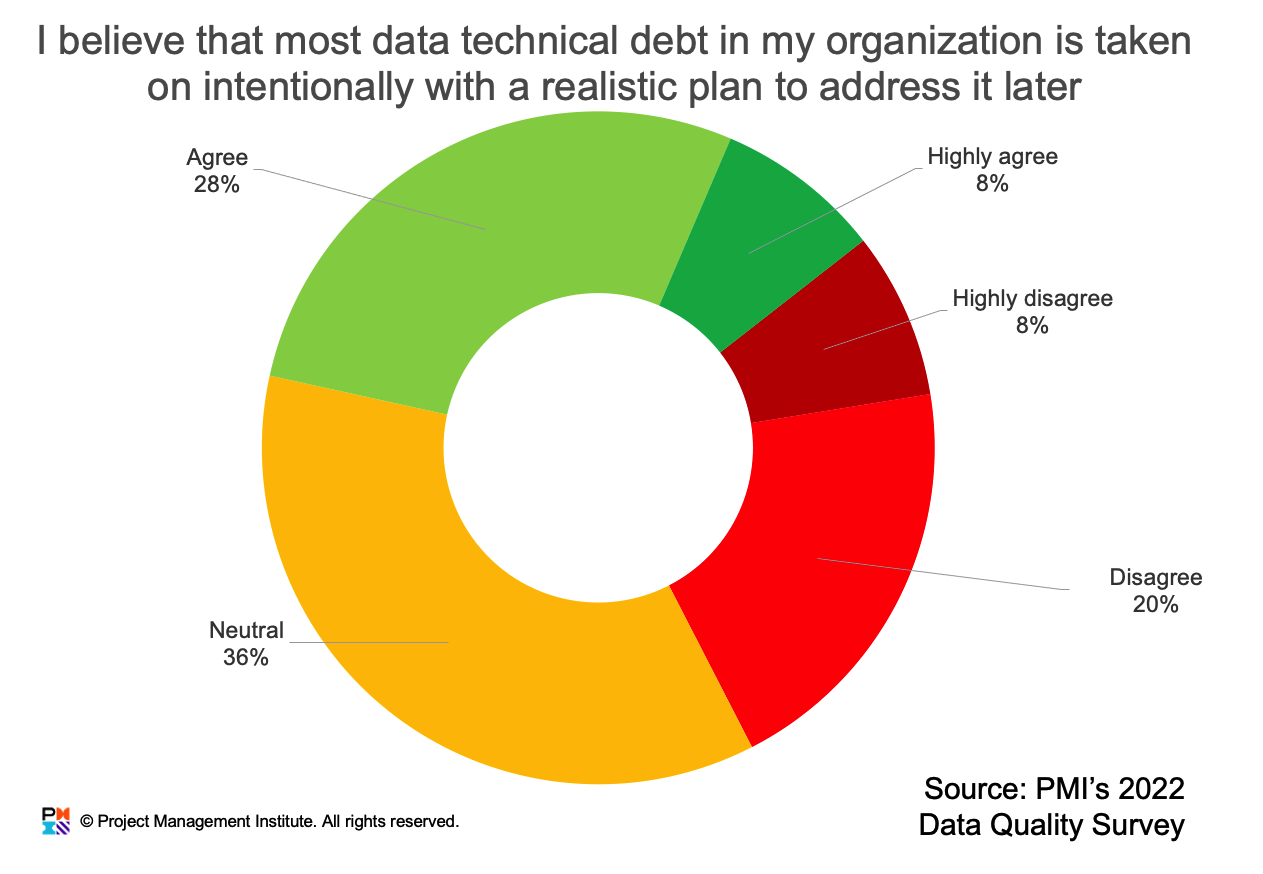

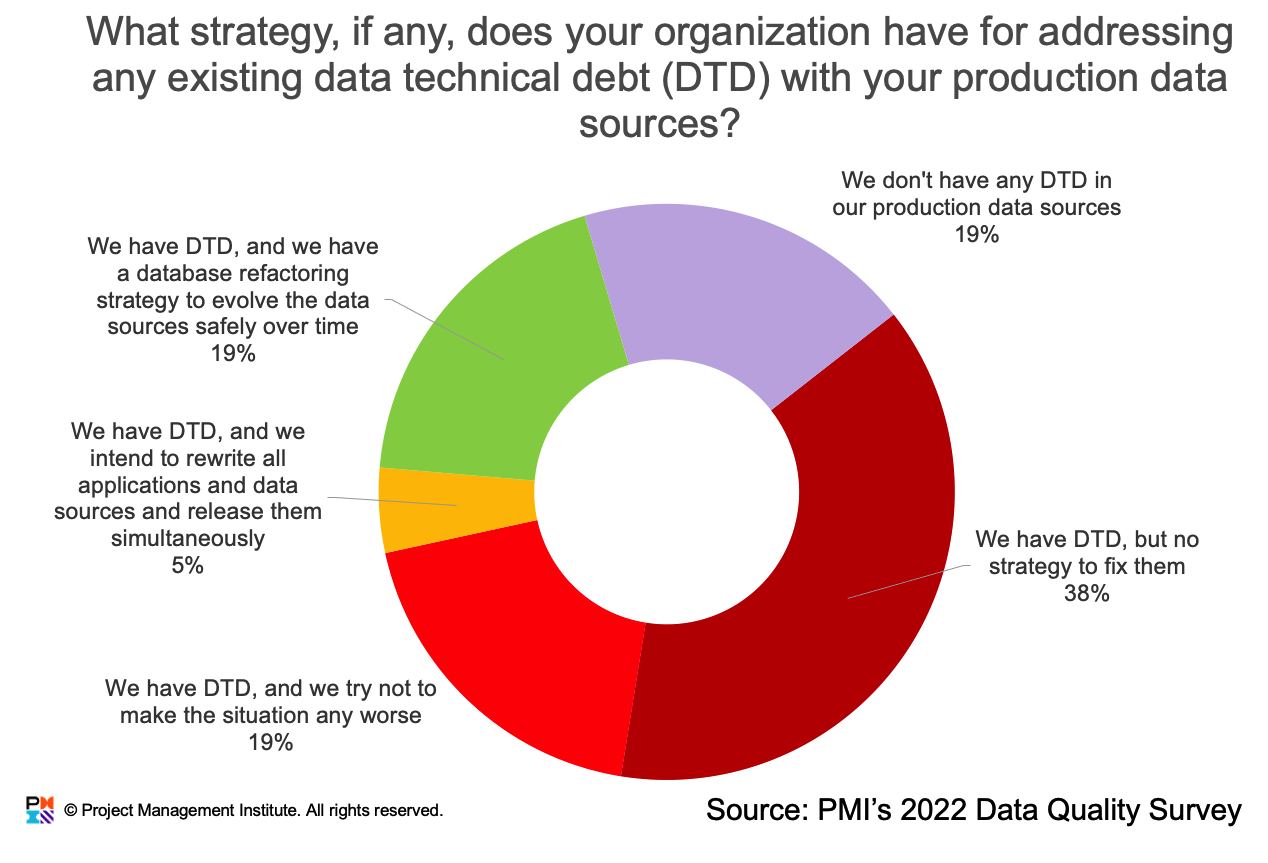

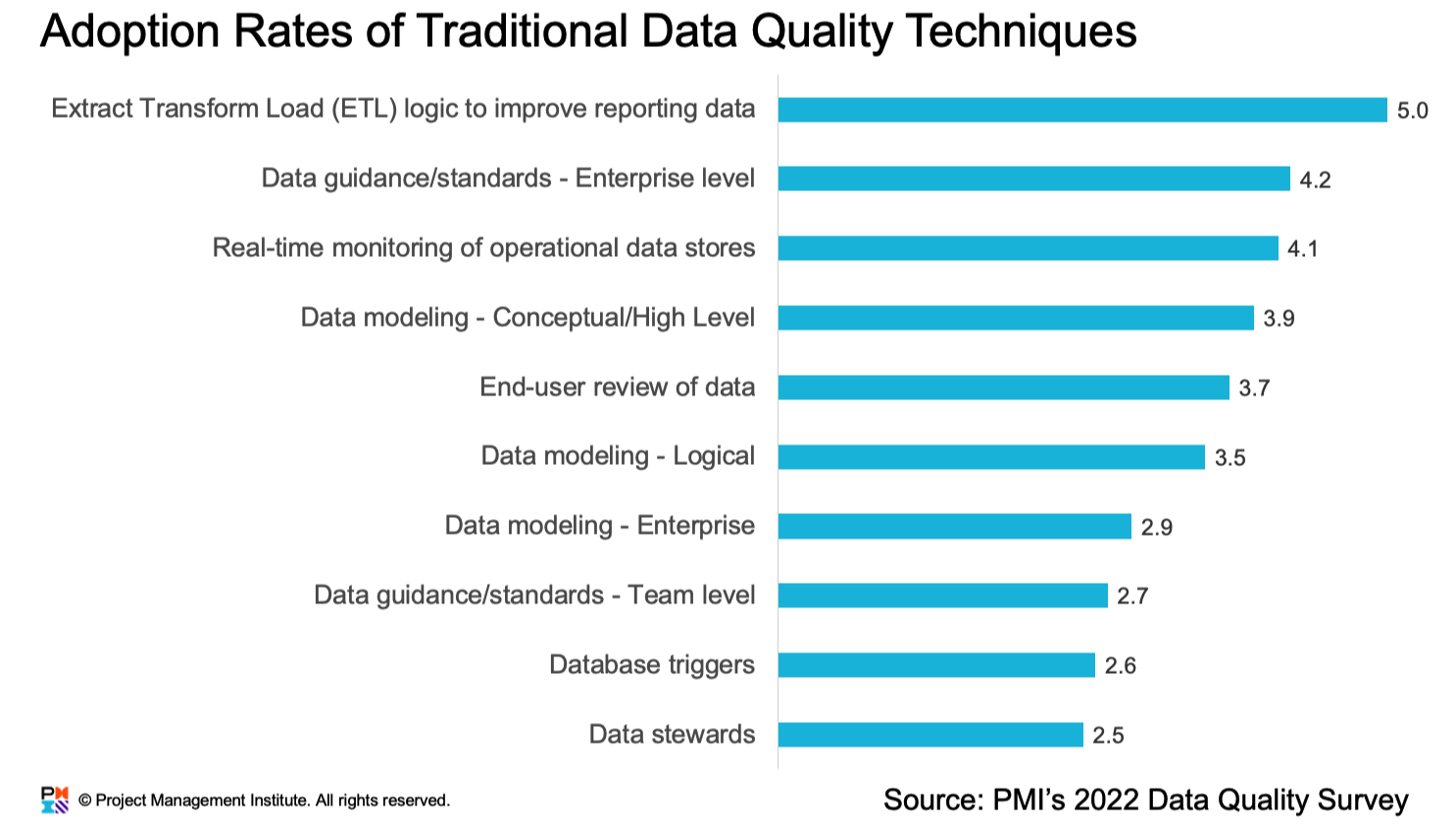

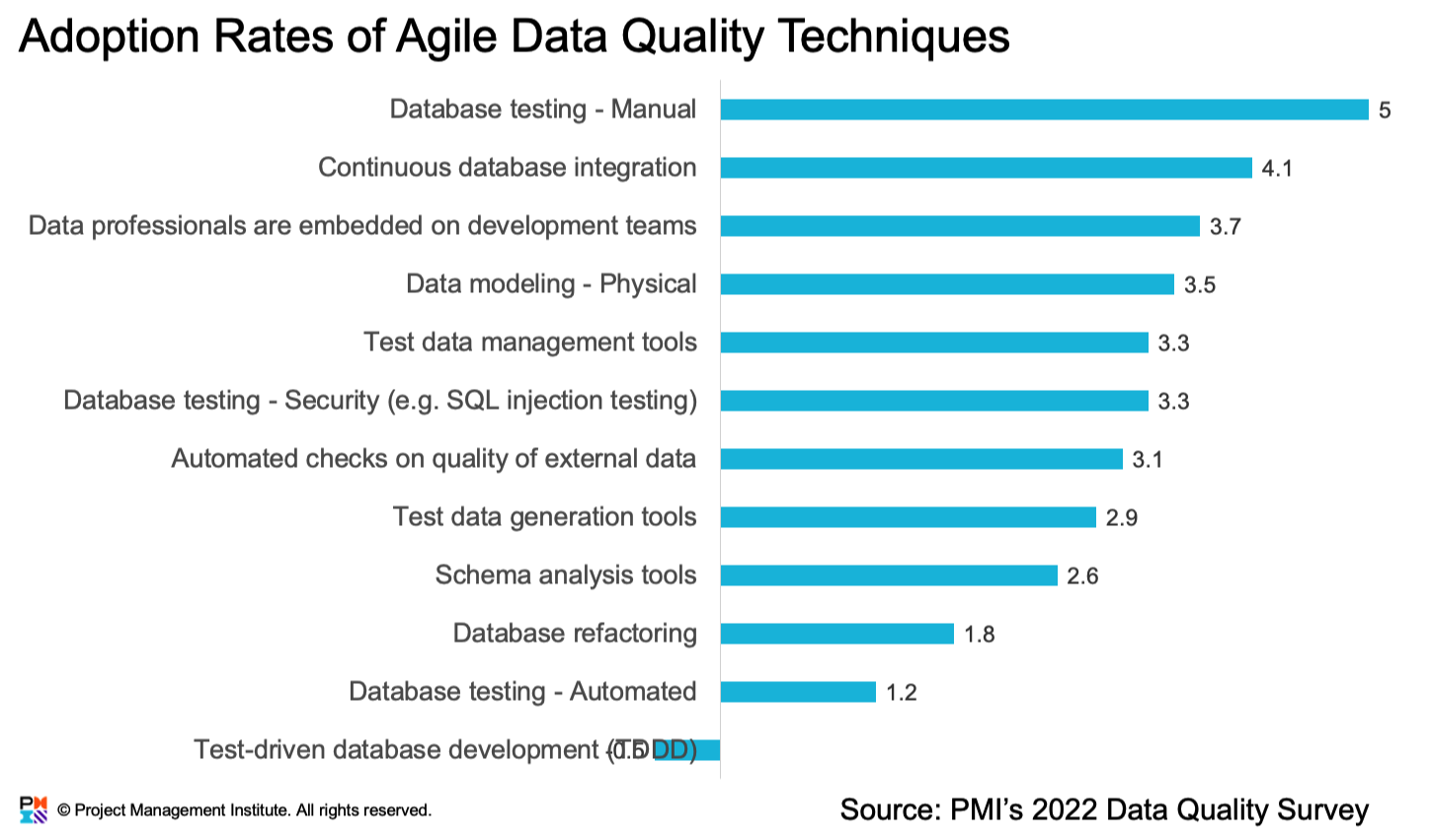

Figure 3 explores whether DTD is taken on intentionally, which is a management decision, with only 36% of respondents providing positive answers. Once again, this is an indication that there is significant room for improvement in many organizations.

Figure 3. Is data technical debt taken on intentionally?

Addressing Data Technical Debt

I also explored whether organizations were addressing DTD effectively. Figure 4 summarizes the results of the question that explored whether organizations had a DTD strategy in place. Figure 5 summarizes the results of a question about the adoption rate of traditional data quality strategies, and Figure 6 the adoption rate of agile data quality strategies. In general, agile quality strategies are more effective in practice than traditional strategies.

Figure 4. Do you have a strategy to address data technical debt?

Figure 5. Adoption rate of traditional data quality techniques.

Figure 6. Adoption rates of agile data quality techniques.

|

Is Technical Debt A Management Problem? Survey Says...

|

From October 25 to November 28, 2021, I ran a survey exploring issues around technical debt, which received 166 responses. Of the respondents, 28% were non-management, 37% were project managers, and 35% were senior managers.

In the survey we used the following definition: Technical debt refers to the concept of an imperfect technical implementation, typically the result of a trade-off made between the benefit of short-term delivery and long-term value by a software development project team. Some people like to think of technical debt as the work you must do tomorrow because you took a shortcut to deliver today. Like financial debt, technical debt is accrued over time, reduces your ability to act, and may need to be paid down in the future.

Here are my thoughts based on the survey results:

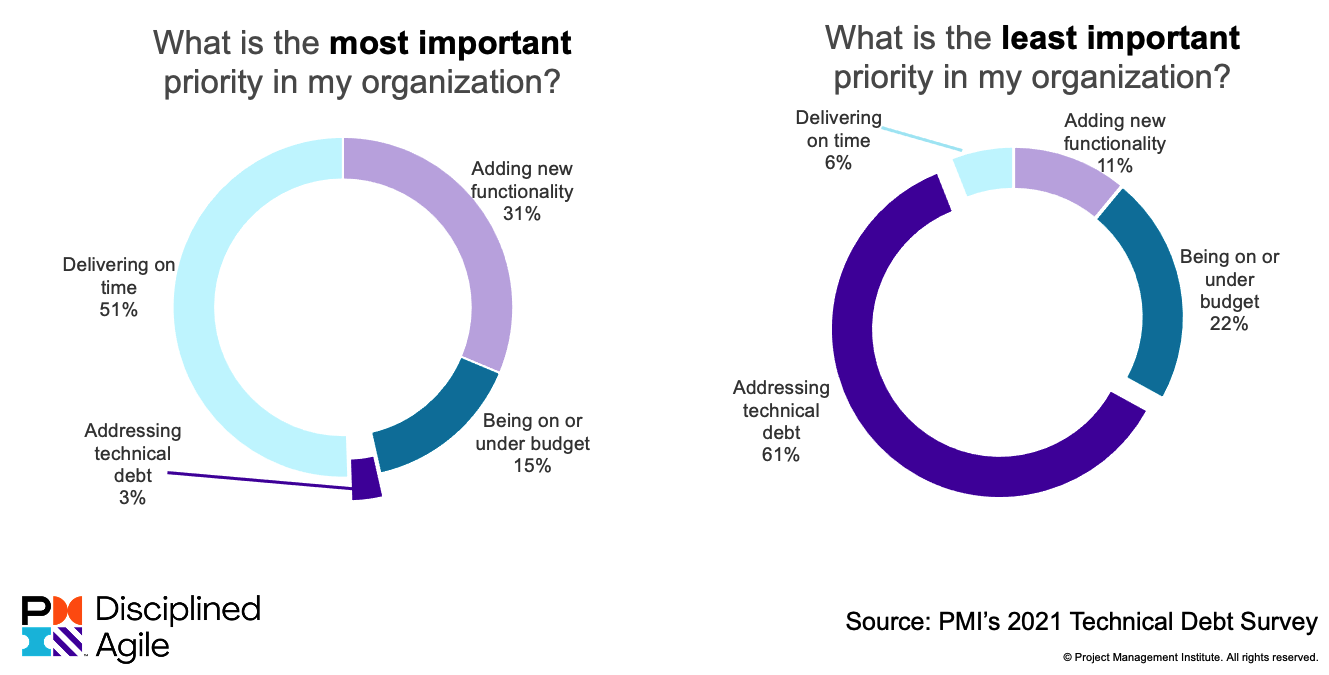

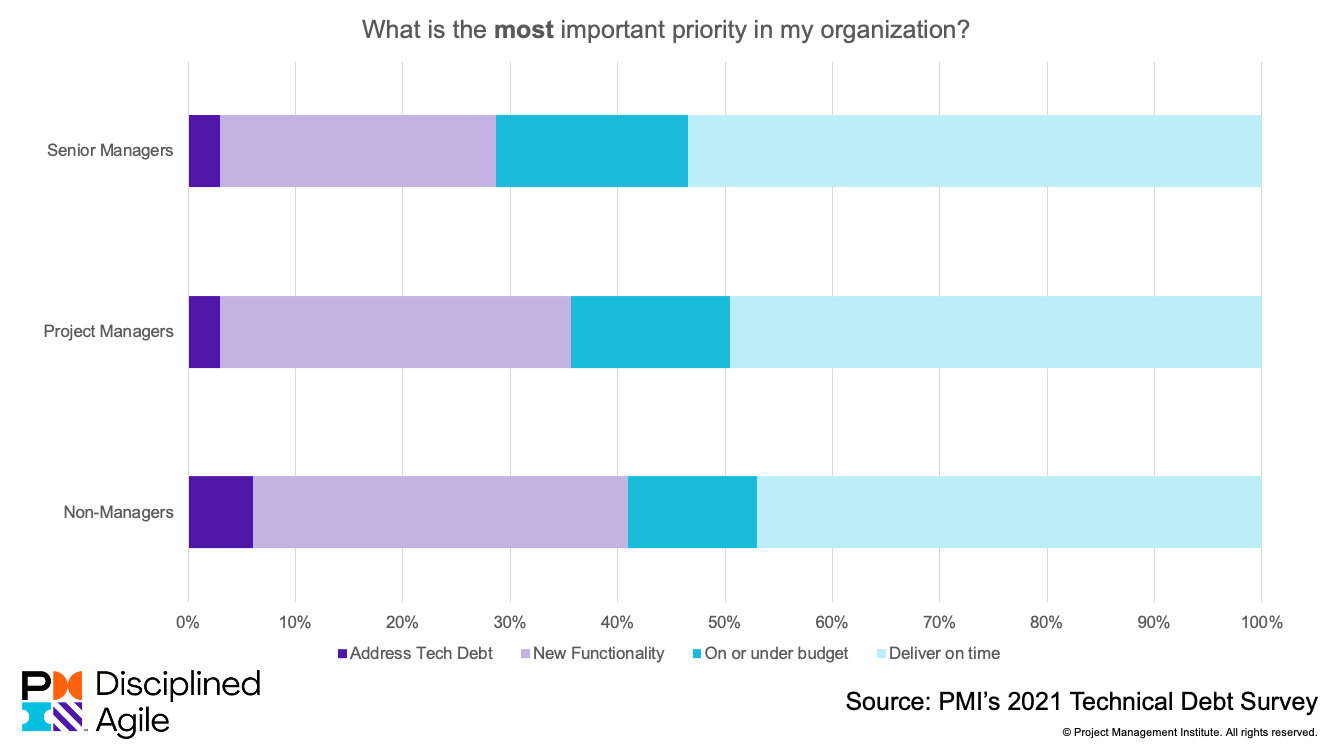

Addressing Technical Debt Isn't an Organizational Priority

The survey included a question that asked people to rank how important they believed four issues are to their organization: addressing technical debt, delivering on time/schedule, delivering on or under budget, and adding new functionality. Figure 1 summarizes which factor people believed was most important and which was least important, and addressing technical debt fared poorly. Figure 2 explores differences in opinion between three groups (senior managers, project managers, and non-managers) about what is most important. Similarly, Figure 3 explores differences in opinion about what is least important.

Figure 1. Addressing technical debt is your organization's least important priority.

Figure 2. What is the most important priority for your organization's leadership?

Figure 3. What is the least important priority of your organization's leadership?

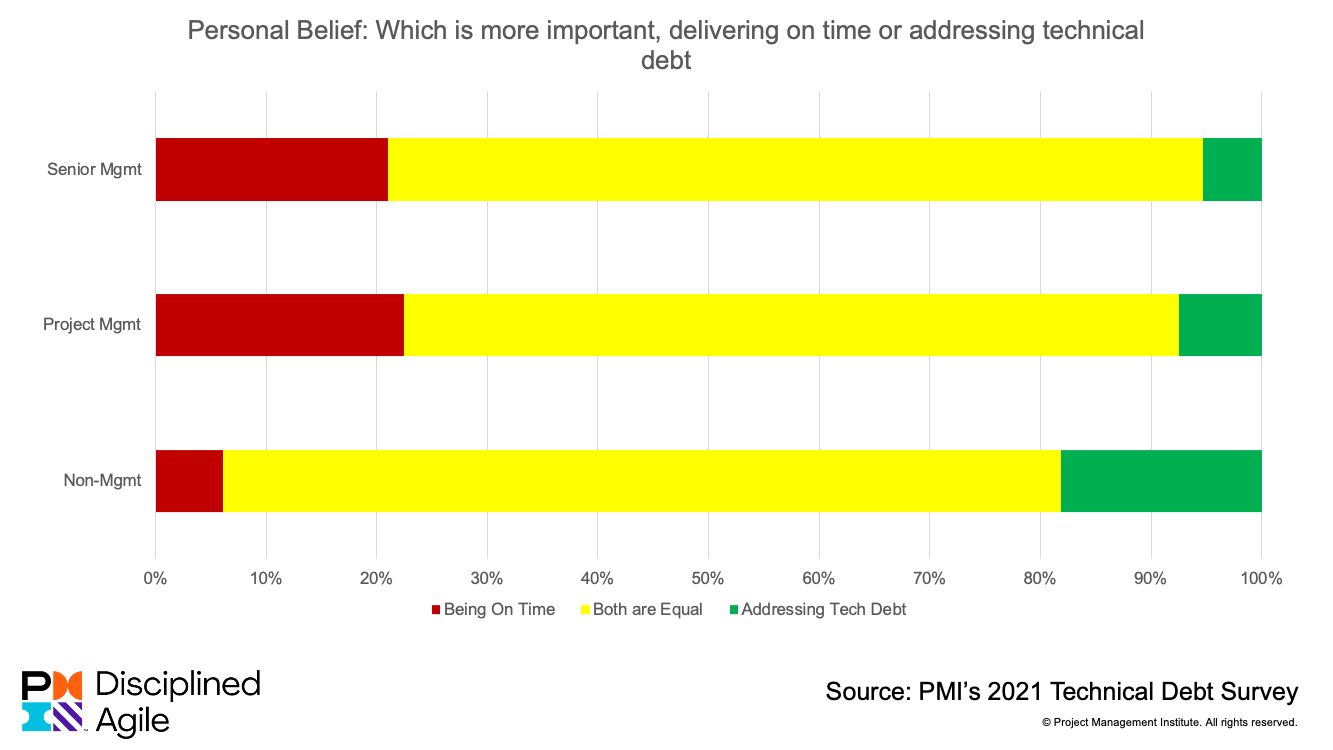

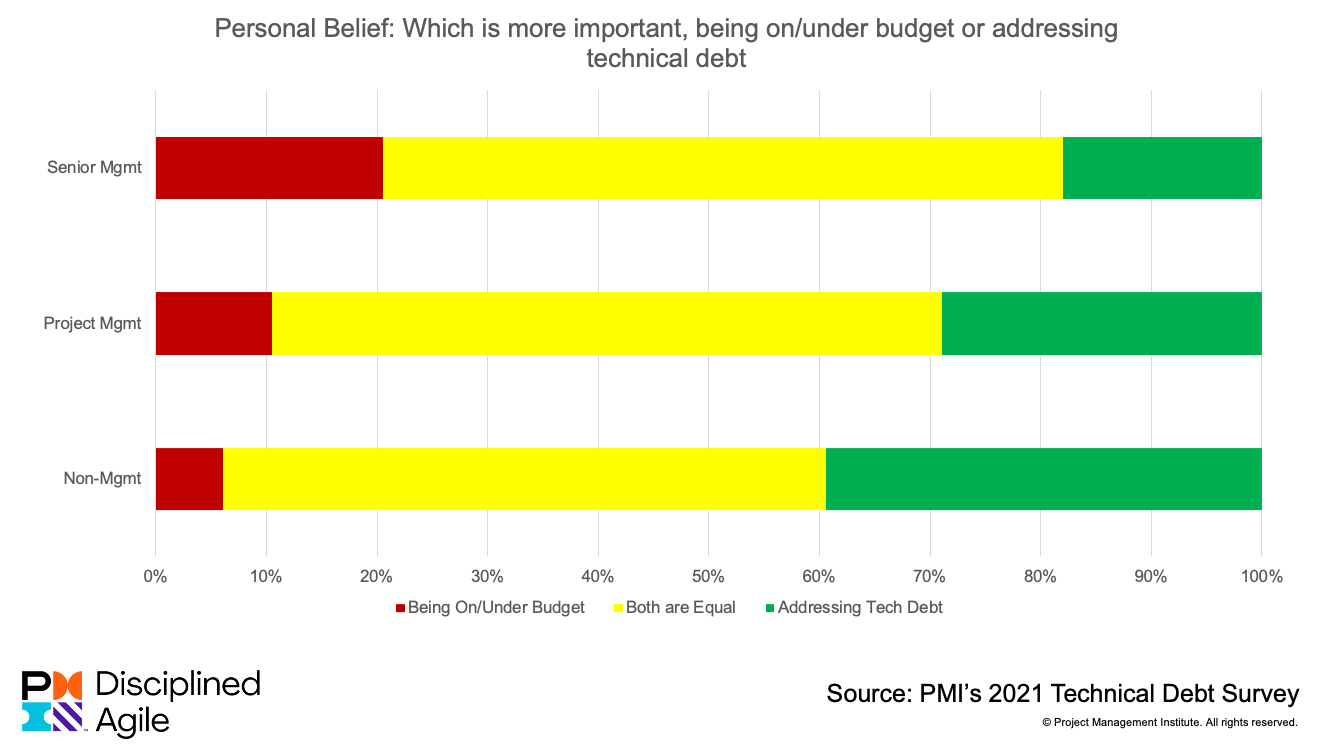

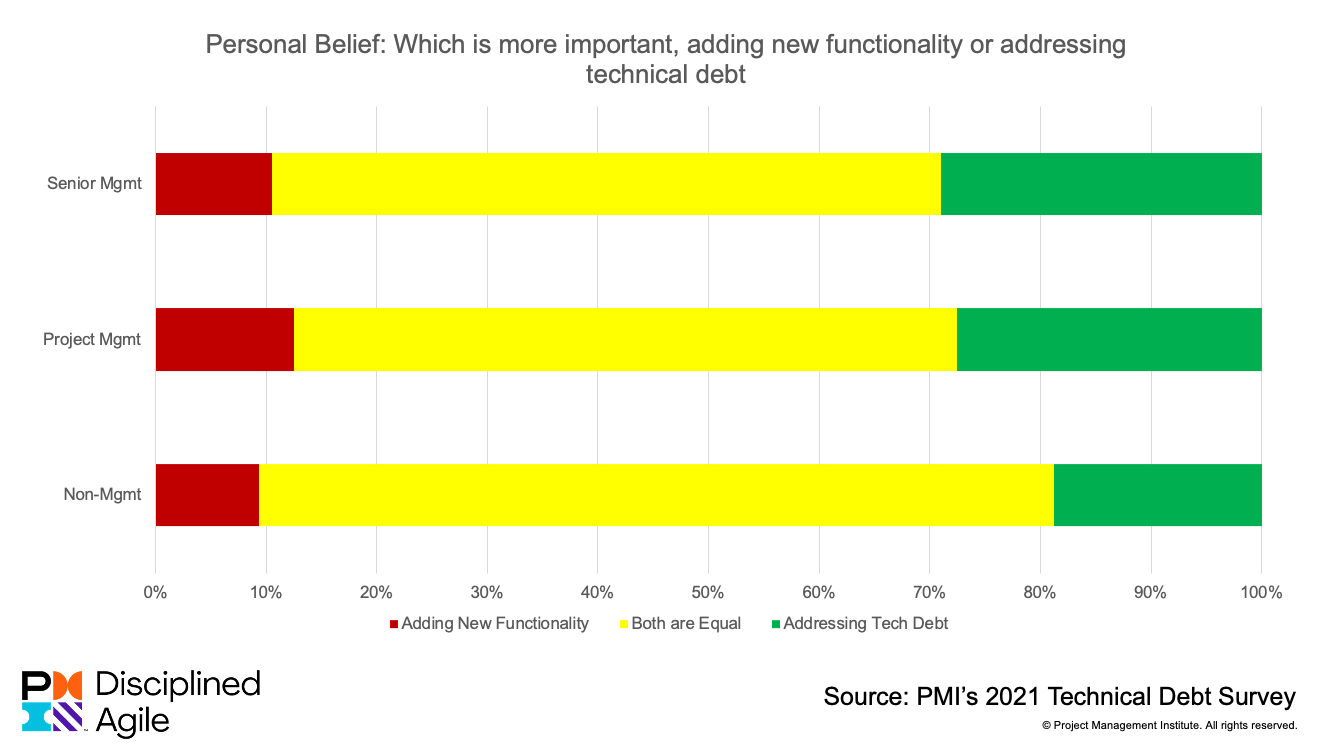

People Personally Believe Addressing Technical Debt is Important

The survey also included three questions that explored how people personally felt about how to prioritize addressing technical debt in comparison with other important management considerations. Figure 4 compares the way that people prioritize being on schedule versus addressing technical debt. Figure 5 compares the way that people prioritize being on budget versus addressing technical debt. Figure 6 compares the way that people prioritize delivering new functionality versus addressing technical debt.

Figure 4. Being on schedule vs. addressing technical debt.

Figure 5. Being on budget vs. addressing technical debt.

Figure 6. Adding new functionality vs. addressing technical debt.

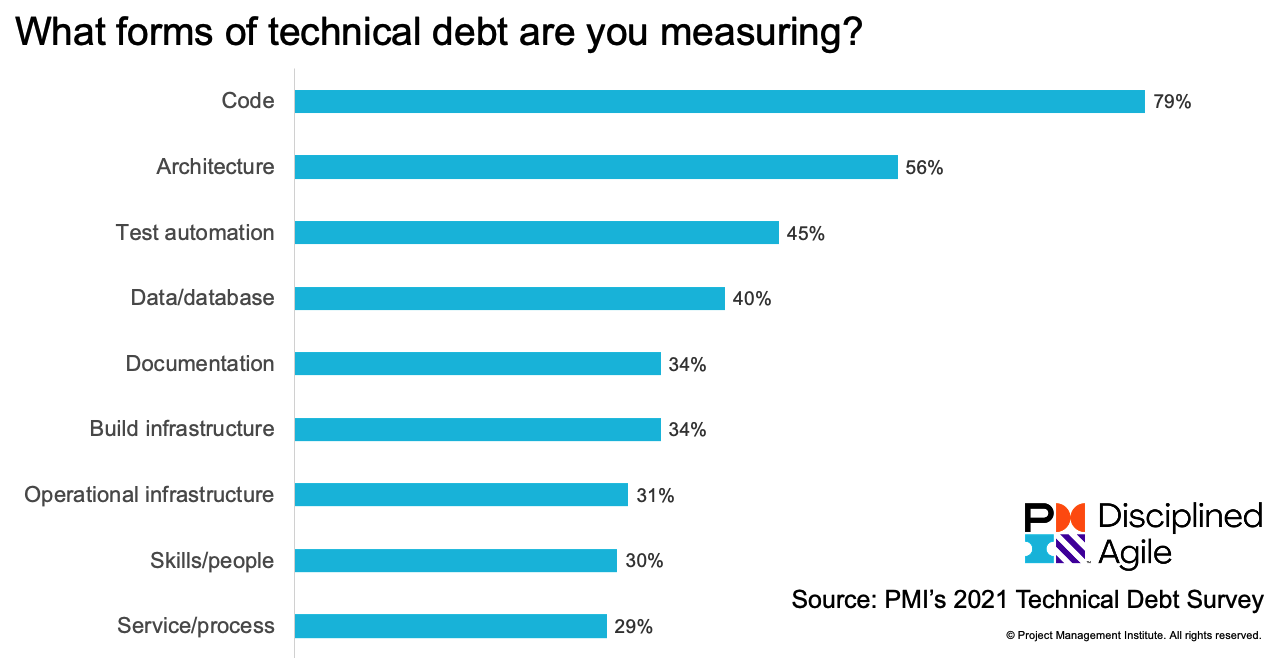

Technical Debt Measurement Needs to Become More Robust

Figure 7 summarizes which forms of technical debt organizations are measuring. Measuring technical debt in code is incredibly common because of the prevalence of tools and the fact that technical debt is often seen as a code issue. As you see in Figure 7, there are many more opportunities to measure the quality levels of various artifacts, but unfortunately not everyone is taking those opportunities. In Disciplined Agile (DA) we have two measurement-oriented process blades, Measure Outcomes and Organize Metrics, that provide great insights into how to organize your metrics strategy.

Figure 7. Measuring technical debt.

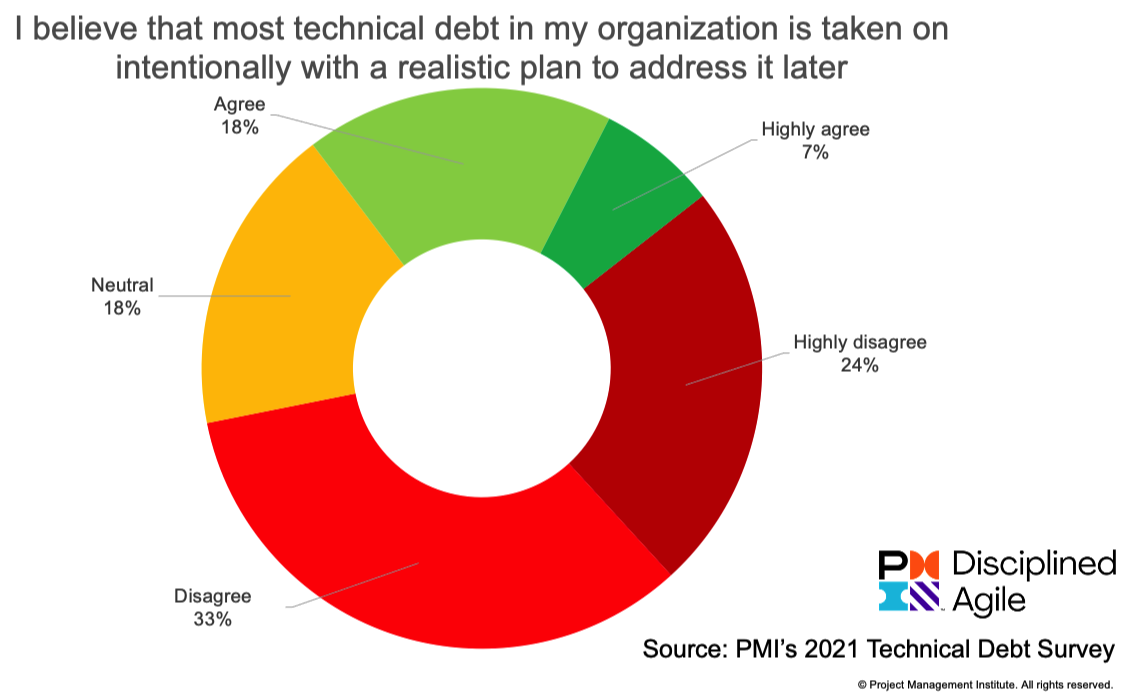

Is Technical Debt Taken on Intentionally or Accidentally?

As Martin Fowler clearly points out with his Technical Debt Quadrant advice, you really want to take on technical debt intentionally. Intentional means that you take technical debt on in a prudent and deliberate manner where you understand the implications of doing so. Unfortunately, as you see in Figure 8, few organizations take on technical debt intentionally. This concerns me because it's a leading indicator that technical debt is getting out of control within organizations that aren't considering it intentionally.

Figure 8. Few organizations take on technical debt intentionally.

Technical Debt is a Management Problem

We asked several questions about how well people agreed with certain statements. First the good news:

Now the bad news:

I believe that if we are ever to address technical debt effectively then management understanding about technical debt, and management behavior around it, needs to shift. My recommendation is to choose to deal with technical debt while you still have the opportunity to do so.

Technical Debt Resources

|

You Think Your Staff Wants to Go Back to the Office? Think Again.

|

Many organizations around the world are preparing to bring their staff back into the office. To be fair, they've been making these plans since the early days of the pandemic, and have continued to push back the return date as the situation became clearer to executive leadership. We’re now starting to see organizations coax their employees back into the office in the parts of the world where vaccinations are prevalent. But do employees want to go back? Or, more pertinently, to what extent do they want to go back to the office? I ran a survey from late April through May 2021 to explore what people were thinking at the time. Although the data is a bit old now, it reveals some insights that I believe still hold true.

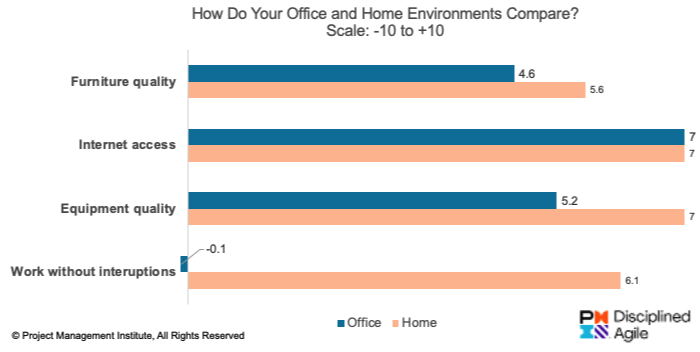

Why Do People Want to Return to the Office?

There are several common reasons why some people want to return to the office. First, some people don't have home office space. Not everyone has a home office that they can work from; some have to make do with the kitchen table or a tiny computer table in some corner. That's not fun. However, as you can see in Figure 1, survey respondents indicated on average that the quality of their furniture and office equipment was a bit better at home than at their office, and their internet quality was the same.

Figure 1. Comparing home and office environments.

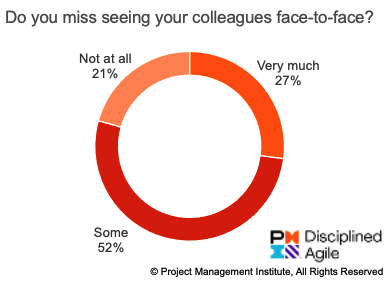

Also, it's nice to get away. Although people love their families dearly, it is nice to get away from them from time to time. Similarly, some people want to return to the office for companionship. Our workplaces are social places where we catch up with friends and colleagues, where we share together, where we eat and drink together, and where we have fun together. This is important and desirable for many people, as we see in Figure 2.

Figure 2. Do you miss your colleagues?

The reality is that some work is best done face-to-face (F2F). Strategizing, planning, and designing are all activities that are best done collaboratively. Ideally these are done F2F in large Obeya rooms that have whiteboard walls and little or no furniture. Such rooms enable and motivate you to move around and work together, rather than sit around and talk at each other. More on this below. Roughly one quarter of survey respondents indicated that they missed F2F interaction with their colleagues very much, and half indicated that they somewhat missed it.

Finally, remote working has extended the work day. An unfortunate side effect of remote work is that you're seen as always available, a problem that is exacerbated when you work with people in other time zones. Some people may believe that this will change once you're back in the office. It won't.

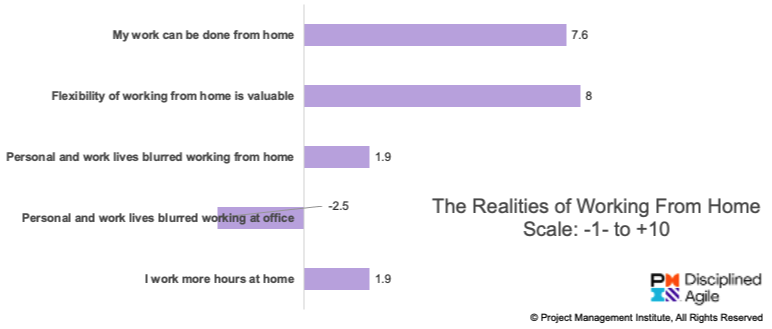

Why Do People Want to Continue Working From Home?

There are also several common reasons why people don't want to return to the office. First, many people have learned that they prefer to work from home. Survey respondents were very positive that it is possible for their work to be done at home, which makes sense given that they had been working from home throughout the pandemic. They were also very positive on average about the flexibility of working from home. Both of these issues are obvious motivators to not return to the office. See Figure 3 for some insights.

Figure 3. Realities of working from home.

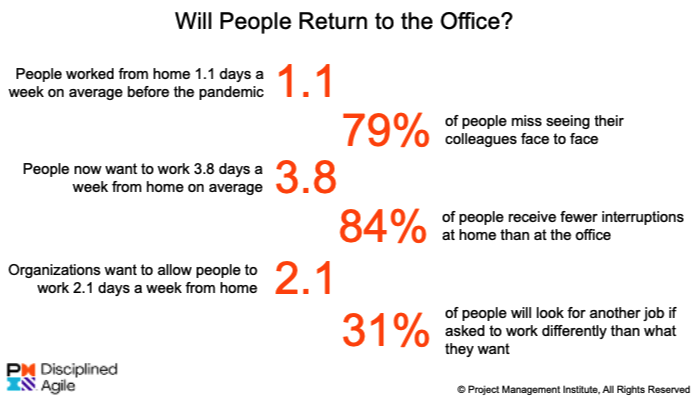

Another advantage is that remote teams are more diverse and inclusive. When teams are capable of working remotely, they are also able to include people from a greater range of locations, a greater range of abilities, and a greater range of cultures. Although this may be uncomfortable for some at first, the opportunities to learn and to grow as a result soon prove to be enjoyable.

Many people have invested in their home office, as implied by Figure 1. How many people do you know who have bought an ergonomic chair? Or a standing table? Or have reworked their guest room into a home office? Anyone who has made this sort of investment is going to be very reluctant to come back into your office any time soon.

My experience is that everyone will soon remember the joys of commuting. The allure of returning to the office to see your friends and colleagues quickly pales in comparison to the 45-minute commute each way to do so. Granted, the time saved by not commuting is often taken back by more collaboration calls, or by such calls being spread over a wider range of times throughout the day. Having said that, a return to the office very likely means you'll have both a commute and the additional range of meeting times.

A great side effect of videoconferencing is that everyone has a voice, not just the people in the office. Remember when you would have meetings where a few people would dial in? Remember how they were often forgotten by the people in the room, or had to struggle to have a voice in the conversation? When everyone is videoconferencing it's a level playing field, and you have the opportunity for a more diverse conversation.

Finally, convincing people to work a few days a week in the office is harder to pull off than you think. A critical issue for organizations is how much of a hybrid working model, in which people work from home on some days and in the office on others, they need to support in the future. Before the COVID-19 pandemic, respondents indicated that they worked from home 1.1 days per week on average and only 19 percent of respondents indicated they worked 3 or more days a week from home. Today’s reality is very different. When asked how many days per week they would like to continue working from home, the average was 3.8 days a week with only 19 percent saying they’d like to work in the office 3 or more days a week. These results are similar to those of a Royal Society for Public Health (UK) study that found that about 5% of people worked from home before COVID-19 and now around 6% want to go back to the office full time. In short, during the pandemic, a large percentage of people have learned that they prefer to work from home.

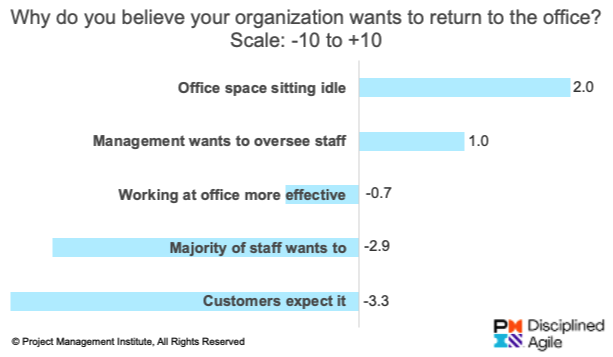

There’s a Disconnect Between Employers and Employees

The survey explored what people believed their organizations wanted to do, and why they were doing so, summarized in Figure 4.

Figure 4. Why does your organization want you to return to the office?

A key issue was how many days a week they believed their organization would allow people to work from home, the answer being 2.1 days a week on average. Compared with the 3.8 days a week that people would like to work from home, this is significantly different. PwC’s US Remote Work Survey also found this disconnect between the desire of organizations and the intent of employees. This difference may prove to be a significant point of contention when organizations start asking their people to return to the office.

Figure 5. Interesting results from the study.

Executives were a bit more optimistic, believing their organization would allow 2.7 days per week on average. This is interesting given that they hope to work at home 3.8 days per week themselves. Either this is an indication that the executives answering the survey aren’t in the loop regarding this decision or they believe the rule won’t apply to them. I’ll leave you to be the judge of that.

Many People Will Choose to Vote with Their Feet

Here’s the big news. The survey asked people what they would do if their organizations asked them to work differently than what they wanted to do. For example, the respondent wants to continue working from home, but their organization wants them to come back into the office at least several days a week. As you see in Figure 6, 31 percent of people claim that they will look for another job.

Figure 6. What will people do?

Interestingly, a Global Workplace Analytics study found that 37 percent of people would take a 10 percent pay cut to work from home. Achievers Workforce Institute’s fourth annual Engagement and Retention Report reveals a spike in employees intending to job hunt in 2021. Organizations may be in for some rough times as they struggle to retain staff.

In March 2022, Pew Research published “Majority of workers who quit a job in 2021 cite low pay, no opportunities for advancement, feeling disrespected,” which summarizes the results of their survey of nearly 1000 people who voluntarily left their jobs in 2021. In other words, it explored the beginnings of "The Great Resignation." They found that:

The New Normal Won’t Be the Old Normal, Get Over It

We all want things to go back to “normal.” The challenge is that our “new normal” will prove to be very different from our old normal. This study found that there is a significant difference between how we as individuals hope our new normal will be and what our organizations appear to want our new normal to be. My advice is to start to have honest - and potentially hard - conversations now to collaboratively define a shared vision of what you want your new normal to be. This is a time of change, and change can be uncomfortable. I recommend that you take this opportunity to choose a new way of working (WoW), to choose a new way to use your office space, and thereby work smarter.

Methodology

From late April through May 2021, I [Scott Ambler] ran an informal survey that received 174 responses. 138 respondents were employees working from home, 32 were contractors/consultants working from home, and four responded "other" and were not included in the results of the survey. 44% of respondents indicated that they were office workers or professionals; 41% of respondents were middle management; and 13% were executive leadership. Regarding location, 46% of respondents were from North America, 30% were from Europe, and 12% were from Latin America. I’ve been running similar studies since 2006, first for Dr. Dobb’s Journal and later for Ambysoft Inc. and Disciplined Agile Inc. I still maintain a page where I share the results of this work, including the source data, at Ambysoft IT Surveys.

|

Contracts, Procurement, Vendors, and Agile

|

This webinar centered on experiences applying LAP and agile contracts in practice. Together we explored:

If you click on Lean Agile Procurement (LAP), Agile Contracts, & Disciplined Agile (DA) you can earn one PDU by watching the recording.

|

Disciplined Agile 5.4 Released

Categories: Disciplined Agile

| We just released DA 5.4 today. The release notes are published here. Several interesting points:

My question to you: What would you prefer we focus on for the DA 5.5 release? Should we finish the goal diagrams for the other process blades, or should we focus on documenting the details behind the diagrams we have? Depth or breadth? |

On February 15, 2022, Mirko Kleiner of the Lean Agile Procurement (LAP) Alliance and I gave a webinar entitled